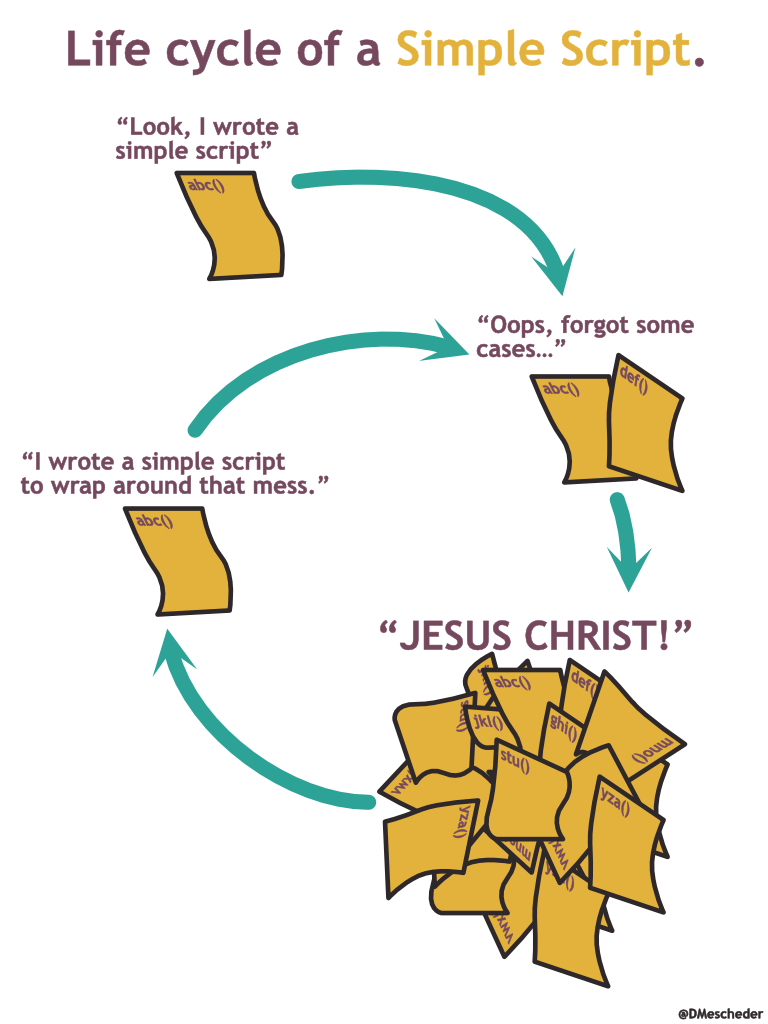

Something that has always bugged me about the current direction of the Continuous Integration (CI) ecosystem is the reliance on centralized tools. The promise is to make the configuration simple. I would claim that even simple build scripts quickly fall victim to the old bash-script death spiral. There must be a better way!

Certainly, there may be solutions that I am not aware of. However, many of the systems considered modern rely on some form of script or “simple configuration file” checked in next to your code to describe a build pipeline consisting of a sequence of steps. This file is read and interpreted by a central system that executes the appropriate steps.

This is fine as long as your pipeline is some version of build/test/publish. However, in my experience, build descriptions tend to evolve in the same way that “short 10 line bash scripts” do: they get extended and grow with the needs of the project. Maybe you want to deploy a documentation package, set up a staging environment and run some smoke tests, employ a package tagging/promotion strategy, build different artifacts for different target architectures, or compile a selection of downstream projects against a new version of a library? Whatever it is, it will necessarily increase the complexity of your CI build.

And while all of this is very achievable with existing CI frameworks, I think that every piece of non-trivial code should be testable locally. It feels wrong to have to push a build description to your VCS repository only to test whether the build works.

We don’t have this issue with other parts of a typical developer infrastructure: One of the big benefits of a system like git is that everything can be done locally and you only need to push to an agreed-upon place to share your work with the rest of the team. Tools like GitHub provide the added convenience of a graphical interface, but they are not required to use git and if they are down, your team should not normally be blocked by the downtime. The same cannot be said for your continuous integration server.

What could a decentralized CI system look like?

Let us first list what a CI system typically does:

- Monitor branches in a version control system.

- Automatically run builds once there are changes.

- Report build results back to a graphical version control system like GitHub, GitLab, or Bitbucket.

- Provide one reproducible build, not depending on the developer machine where it was created.

- Push artifacts either to some repository from where they can be deployed or directly deploy them.

- Keep a list of past builds with logs and timings to be inspected later.

When it comes to decentralizing these responsibilities, the first part that we need to solve is to reproducibly execute the build procedure. For reproducible execution in arbitrary environments, we now have a widespread standard tool available: containers. Thanks to containers, it is not too much to ask that complex sequences of build and deployment steps, including any custom logic that you would normally bake into a Jenkins pipeline, be executable on a developer laptop.

Such a system could, like any other modern CI platform, offer a web interface that works on localhost just as well as on a remote server. To write the descriptions of the build pipelines it would make sense to offer libraries so that the build description could be written embedded into a fully functional programming environment, benefitting from all the available tooling.
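To make the idea of a build description embedded in a programming language a bit more concrete, here is a minimal sketch in Python. Everything in it (`Pipeline`, `Step`, the step names, the `version` key) is made up for illustration and does not refer to any existing library. The point is only that steps become ordinary functions that you can run, debug, and unit test locally.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Step:
    name: str
    action: Callable[[Dict[str, str]], None]


@dataclass
class Pipeline:
    """Hypothetical pipeline: an ordered list of named steps sharing a context."""

    steps: List[Step] = field(default_factory=list)

    def step(self, name: str):
        # Decorator that registers a plain function as a pipeline step.
        def register(fn: Callable[[Dict[str, str]], None]):
            self.steps.append(Step(name, fn))
            return fn

        return register

    def run(self, context: Dict[str, str]) -> List[Tuple[str, str]]:
        # Run the steps in order and stop at the first failure.
        results = []
        for s in self.steps:
            try:
                s.action(context)
                results.append((s.name, "ok"))
            except Exception as exc:
                results.append((s.name, f"failed: {exc}"))
                break
        return results


pipeline = Pipeline()


@pipeline.step("build")
def build_artifact(ctx: Dict[str, str]) -> None:
    # Placeholder for the real build command.
    ctx["artifact"] = f"app-{ctx['version']}.tar.gz"


@pipeline.step("verify")
def verify_artifact(ctx: Dict[str, str]) -> None:
    if "artifact" not in ctx:
        raise RuntimeError("nothing to verify")
```

Calling `pipeline.run({"version": "1.2.3"})` executes the same steps on a laptop as it would inside the build container on a server.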

The second obvious question that we need to address is the publishing of build artifacts and deployment. I cannot possibly suggest that any developer publishes and deploys artifacts from their local machine?!

And so what if we did allow this? Sure, you don’t want to accidentally push some local build to production. Though as long as this scenario is ruled out, being able to publish from a local machine does not sound so crazy anymore.

Instead of blocking publish and deploy steps when a CI run is executed locally, I propose that the CI system should be aware of the environment it ran in (“Daniel’s laptop”) and encode that information in the metadata of the produced artifact together with the version. Your production environment should have a safety filter so that it only accepts deployments coming from a particular environment. When the environment is not equal to the build server, the deploy step would decide to deploy to a test environment instead, running through the same code paths just with a different target.
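A rough sketch of that rule in Python could look as follows. The names (`Artifact`, `built_in`, `TRUSTED_ENVIRONMENT`, the label `"build-server"`) are assumptions of mine, chosen only to illustrate the two checks: the deploy step picks its target from the environment label, and production independently refuses anything that was not built on the build server.

```python
from dataclasses import dataclass

# Hypothetical label for the one environment allowed to deploy to production.
TRUSTED_ENVIRONMENT = "build-server"


@dataclass(frozen=True)
class Artifact:
    name: str
    version: str
    built_in: str  # environment the CI run executed in, e.g. "daniels-laptop"


def choose_target(artifact: Artifact) -> str:
    # Same deploy code path everywhere; only the target differs.
    if artifact.built_in == TRUSTED_ENVIRONMENT:
        return "production"
    return "test"


def production_accepts(artifact: Artifact) -> bool:
    # Safety filter on the production side, independent of the deploy step.
    return artifact.built_in == TRUSTED_ENVIRONMENT
```

With this, an artifact built on a laptop flows through the same deploy logic as one from the build server, but it can only ever end up in the test environment.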

This leads us to “having a central place for inspecting logs, build results, and timings”. While it is of course useful to have a central place which you can bookmark to have all build results available, it is easy to imagine a system where, like git, the central system is just yet another instance of a tool. This place just happens to receive a label. To get the same advantages of traditional build systems, it suffices to regard build metadata like logs, timings, test results, or code coverage as just one more artifact that your CI system produces. These can be packaged and published to shared storage like any other artifact. Then, all that is required is a graphical interface on top of this storage which any developer can either run locally or access at a central location to search and inspect these results.
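Treating build metadata as just another artifact could be as simple as the following sketch. The record layout and the function names `run_step_recorded` and `package_report` are hypothetical; the resulting JSON document is what you would then push to shared storage next to the other artifacts.

```python
import json
import time
from typing import Callable, Dict, List


def run_step_recorded(name: str, action: Callable[[], None]) -> Dict[str, object]:
    """Run one step and capture its status, duration, and error message."""
    started = time.monotonic()
    try:
        action()
        status, log = "ok", ""
    except Exception as exc:
        status, log = "failed", str(exc)
    return {
        "step": name,
        "status": status,
        "seconds": round(time.monotonic() - started, 3),
        "log": log,
    }


def package_report(environment: str, records: List[Dict[str, object]]) -> str:
    # Serialize the metadata so it can be published like any other artifact.
    report = {"environment": environment, "steps": records}
    return json.dumps(report, indent=2, sort_keys=True)
```

A graphical interface, whether it runs locally or at a central location, then only needs to read these documents from the shared storage.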

Lastly, there is the feature to monitor branches, which is probably the least interesting part of the proposed setup. It suffices to provide a daemon process that does just that and launches the dockerized build if a change is detected. Nothing should stop a developer from running this process locally, even though I suspect that this will have less value than being able to reproduce the full CI build on demand.
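The core of such a daemon is small. Below is a sketch that polls branch heads and launches a build when a watched branch moves. Both `read_heads` and `launch_build` are injected placeholders that I made up: in a real setup the first might wrap something like `git ls-remote`, and the second might build and run the container. The bounded `polls` parameter is there only to keep the example finite.

```python
import time
from typing import Callable, Dict, List


def changed_branches(
    previous: Dict[str, str], current: Dict[str, str], watched: List[str]
) -> List[str]:
    """Return the watched branches whose head commit differs from last time."""
    return [b for b in watched if b in current and previous.get(b) != current[b]]


def watch(
    read_heads: Callable[[], Dict[str, str]],
    launch_build: Callable[[str, str], None],
    watched: List[str],
    polls: int,
    interval_seconds: float = 0.0,
) -> None:
    # Nothing has been seen yet, so the first poll builds every watched branch once.
    seen: Dict[str, str] = {}
    for _ in range(polls):
        heads = read_heads()
        for branch in changed_branches(seen, heads, watched):
            launch_build(branch, heads[branch])
        seen = dict(heads)
        time.sleep(interval_seconds)
```

Because the two callbacks are plain functions, the monitoring logic itself can be tested without a repository or a container runtime.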

So in conclusion the proposed solution would consist of the following parts:

- A monitoring tool that tracks changes on the configured branches and, when it detects one, builds the Docker container with the build environment and runs that container.

- A couple of libraries and tools to facilitate the creation of the build container (there are already tools that could serve this purpose).

- A metadata policy that makes the build environment an explicit part of an artifact’s meta information.

- A graphical interface to consult build logs and results.

- A mechanism to exchange build logs and results, for example by pushing them to a central repository like you would push any other build artifact.

With this in place, the central build system would just become one easily accessible place that runs the same processes that all developers run locally with the added extra that artifacts are tagged in such a way that deployment to production is allowed.

Sure, the initial setup is a bit more involved than a YAML file, but I feel more confident about the long-term maintainability of such a system.
