Famously, there is no really good way to measure code quality. Stories circulate about shops that elevate metrics like code coverage to a sacred yardstick on which business decisions can be based, and about the absurd consequences of such practices. The best quality metrics that I have found so far are the ones proposed in Accelerate, which have their own challenges and are more about team practices than about the code itself. In this piece, I would like to take a step back and ask why we want to measure code quality in the first place. Maybe a metric is not the only solution.

Measuring code quality is one of those things that come up from time to time as a request from decision-makers who have the very understandable desire not to fly blind, but who also find themselves too far removed from the daily development work to get a realistic sense of the situation. Would it not be nice to have a figure that tells you exactly which projects constitute a risk and where you should direct your focus? Unfortunately, decades of experience have shown us as a field that it is not that simple. While there are some techniques, like measuring test coverage, that I would argue have a loose correlation with quality, they produce figures that can be misleading and that capture only a fraction of what you would consider quality. Analysis tools like Sonar are a decent attempt, but many of the figures coming out of such a tool are not directly actionable, and those figures are likely either to become vanity metrics or to be swept under the rug.

I would like to change the focus of the discussion a little bit and go back to why you wanted to measure code quality in the first place. Your goal was likely not to produce a number. Instead, I would argue that you would like to get insights into the following questions:

- What is the cost of change in a given system? When adding a new feature, what is the friction caused by the existing code?

- How big is the risk that the system contains a critical bug or security vulnerability which will blow up in your face?

- If there is a critical problem while the core development team is at a beach somewhere, how long will it take you to recover?

We can see that code quality metrics are at least in part a risk management tool.

Fire Drills Mitigate Risk

Here’s another example of a risk management problem: What if your office building catches fire? Will you be able to evacuate in time? There are standards and best practices but you will typically not calculate a fire-safety metric for your company. Instead, you organize fire drills to simulate the emergency. This addresses the issue in two ways: You gain insights into bottlenecks or problems (“Employees in the bathroom did not hear the alarm”) and your team also practices the actions that need to be taken in a real emergency.

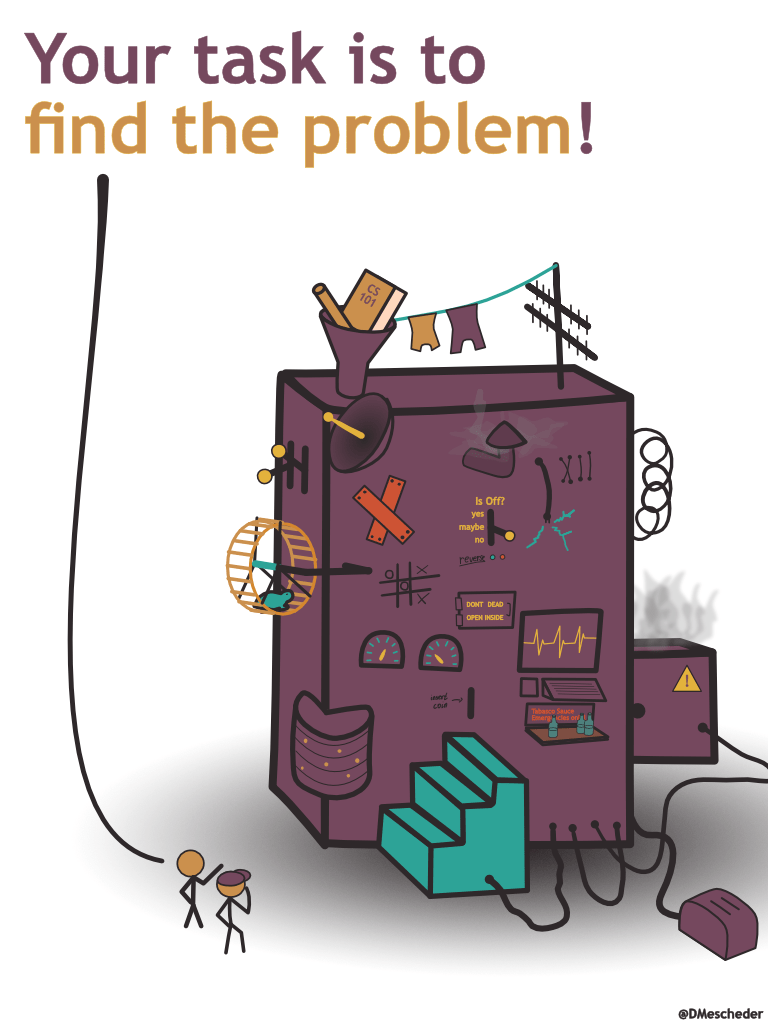

What if we translate this strategy to the problem at hand? Instead of trying to measure the quality of your code, try out what would happen in an emergency. Here’s an example of what this might look like: On Monday, the team responsible for the project hides a bug in its code and deploys the result to a test environment. Ideally, the problem should be something non-trivial like a race condition. On Tuesday, a team of 2-3 people from a different part of the organization gets called in and presented with a bug ticket describing the symptoms. Their task is to use nothing but the documentation available to them to set up the development environment, reproduce the issue, find its cause, and propose a fix. This can be a fun exercise, so feel free to provide snacks. On Wednesday, you organize a post-mortem with both teams to discuss how it went and to exchange ideas for improvements that would have made the lives of the emergency response team easier.

Depending on your concrete setup, there are likely some details to be figured out. For instance, the commit in which the bug was introduced will likely be easy to spot in your version control history. So either the VCS history is off-limits for this exercise (not ideal, because in a real emergency it would be an important source of information), or extra effort needs to be spent on hiding the bug, e.g. by creating a copy of the repository in which you add the bug to some unrelated past commit. This in turn might be a bit too much of a hassle.
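If you do want to try the second option, here is a minimal sketch of how folding the bug into an older commit of a repository copy might look with Git. Everything named here is an assumption for illustration: the helper names `plan_hidden_bug` and `run_plan`, the idea of keeping the bug as a patch file, and a Unix-like machine where the `true` command exists. It relies on Git's fixup and autosquash mechanism and has not been hardened for real use.

```python
import os
import subprocess
from pathlib import Path


def plan_hidden_bug(source_repo, drill_repo, target_commit, patch_file):
    """Return the git commands that fold a bug patch into an old commit.

    All four parameters are hypothetical inputs chosen for this sketch.
    """
    # Resolve the patch path because `git -C` changes the working directory.
    patch = str(Path(patch_file).resolve())
    git = ["git", "-C", str(drill_repo)]
    return [
        # 1. Work on a copy so the real repository is never rewritten.
        ["git", "clone", str(source_repo), str(drill_repo)],
        # 2. Apply the bug to the working tree and stage it.
        git + ["apply", "--index", patch],
        # 3. Record it as a fixup of the commit that should "contain" it.
        git + ["commit", "--fixup=" + target_commit],
        # 4. Squash the fixup into that commit by rewriting history.
        git + ["rebase", "-i", "--autosquash", target_commit + "~1"],
    ]


def run_plan(commands):
    """Execute the planned commands, stopping at the first failure."""
    # GIT_SEQUENCE_EDITOR=true accepts the autosquash todo list unedited,
    # so the interactive rebase runs without opening an editor.
    env = dict(os.environ, GIT_SEQUENCE_EDITOR="true")
    for argv in commands:
        subprocess.run(argv, check=True, env=env)
```

Calling `run_plan(plan_hidden_bug("/path/to/project", "/path/to/drill-copy", "abc1234", "bug.patch"))` would produce the copy, with all paths and the commit ID being placeholders. Some caveats: the rebase can stop with conflicts if later commits touch the same lines as the patch, the target commit must have a parent for `~1` to resolve, and the rewritten commits get new committer dates that an attentive drill team might notice.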

What’s important, I think, is that both teams take this as a learning exercise and not as something that they will be evaluated against (which is always a big risk with any metric). And yes, your managers will need to be able to correctly interpret the results of the exercise, which will be more subtle than saying “the risk score on this project is 65%”. Then again, it is exactly this complexity and nuance that makes our field both challenging and interesting.